Information Architecture Research Case Study:

How a Card Sort Helped a Top Financial Firm Create an Intuitive IA

While challenging to do right, a card sort study is the best starting point for generating a user-centered information architecture. Here’s the 12-step process we used for a Fortune 500 content hub — and the challenges we overcame.

The Challenge: The Marketer-Designed IA

Years ago, a marketing team at a well-known enterprise financial company was working with their IT group to create a content microsite. The site would house informational and brand-focused content, which had previously been scattered across various websites, newsletters, and blogs.

The team had 125 content pieces ready to go and plans to create thousands more. The site would have its own navigation, and the team needed a way to organize the content that made sense to users.

They contacted us to lead information architecture research with users.

The problem: the team already had strong opinions about how the IA should be structured and labeled, none of which were informed by user research. On our initial call, they told us:

We just want some research to help us pick a winner among our 3 existing ideas, maybe with some tweaks. Ideally we want this done within a week or two.

We responded with a simple question:

What if the best IA for customers looks nothing like the 3 versions that you’ve come up with on your own?

After a long silence, we continued:

If we limit ourselves to a quick round of IA testing, we won’t have an opportunity to come up with something truly human-centered if the existing versions perform poorly.

Our hunch, we told them, was that the existing IA concepts would cause many findability problems for users. We put it gently:

You are marketers who live in this content every day. A structure that makes sense to you will likely trip up the average consumer, who only needs this type of content occasionally.

There was a more obvious problem with the existing drafts.

Your category names sound cute and clever; for example, one version uses “Live | Laugh | Learn”. While this might work well in a marketing campaign, when it comes to web navigation it’s likely to cause confusion. Your users are trying to quickly find information or complete a task. To support them, your best bet is to use plain language that matches the words they use.

How do you discover the language of your customers? How do you understand the way they approach this content? That brings us to …

The Solution: Card Sort Study

After some back-and-forth, the project team agreed to a 1-month research and design project that started with a card sort study and later tested the IAs through multiple rounds of tree testing, with design iterations between rounds.

In this case study, we cover the most challenging phase of the project: designing and running the card sort study.

In a card sort, we present users with a collection of cards that represent content or functionality; we ask users to group the cards in ways that make sense to them and then label the groups. After watching enough users go through the process, thinking aloud as they go, we’re in a great position to generate intuitive IAs.

Running a card sort is difficult. But it’s the best starting point for creating a usable IA. Here are the steps we went through for this project, along with some of the challenges that we overcame.

Our 12-Step Card Sort Process

We started this project with a quick discovery phase that included a kickoff meeting and a review of relevant resources. As with most of our UX research studies, our primary goals in this phase were to:

- Align with project stakeholders on project goals, research questions, and measures of success.

- Develop an understanding of the target audiences and of the core user goals, scenarios, and tasks.

With this critical foundation in place, we were ready to move on to the steps unique to this research method.

1. Review content inventory.

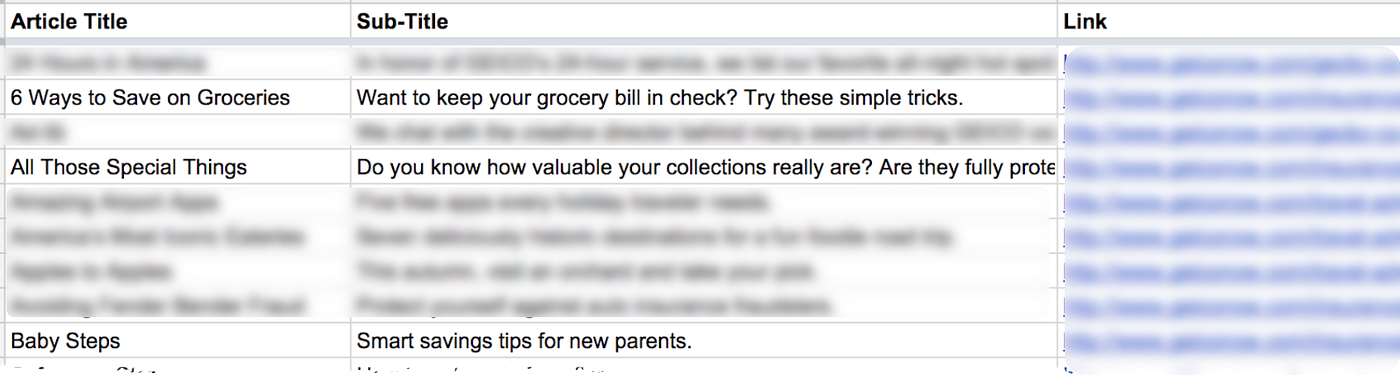

The marketing team sent us a content inventory spreadsheet with existing content and some future content. The spreadsheet included article titles, sub-titles, and links to the actual articles that existed. We spent a good chunk of time reading the articles.

2. Select representative content.

Out of 125 potential content pieces, we picked 100 for the card sort. Often we aim for 30 to 50 cards; in this case, the content was relatively easy to understand and group, so we went higher. The 25 we removed were already well represented in the 100 articles we had.

We made sure that all of the cards were at the same bottom “level”; i.e. we didn’t mix article pages with category pages.

3. Get feedback on content and revise.

We shared the proposed list of articles with the project team and asked if it was a representative sample of future content. We made some revisions based on their feedback.

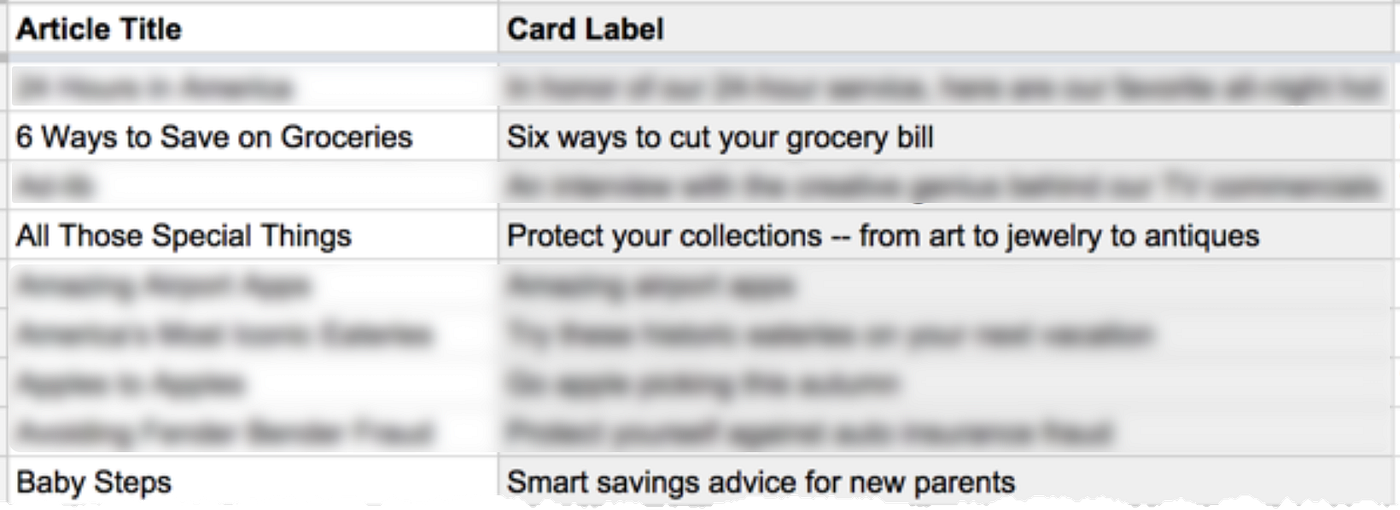

4. Write card labels.

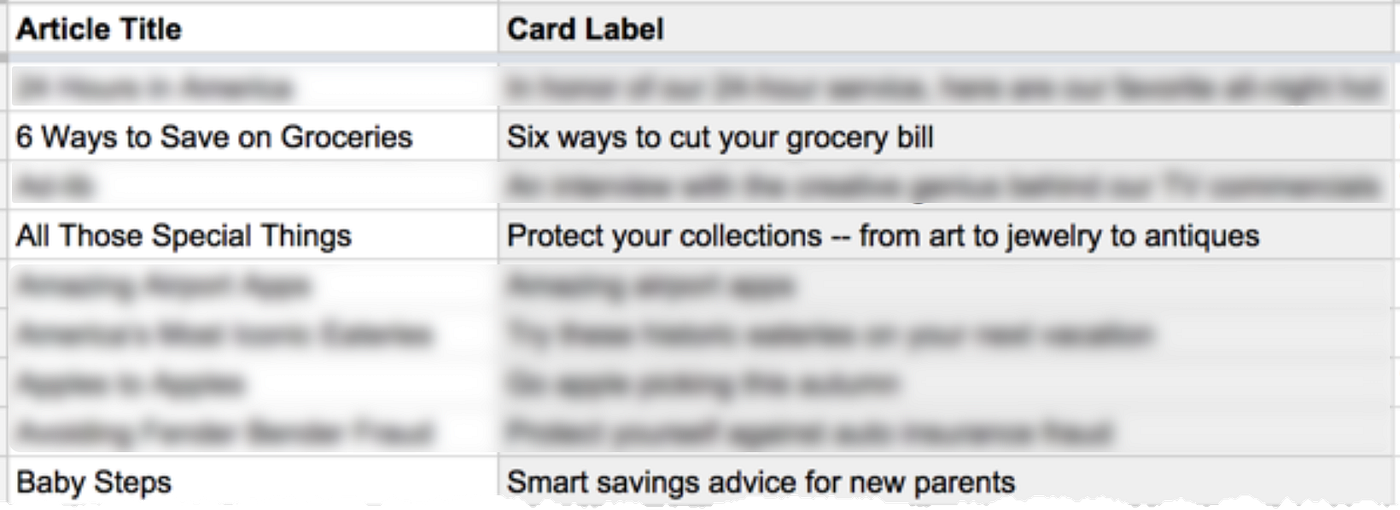

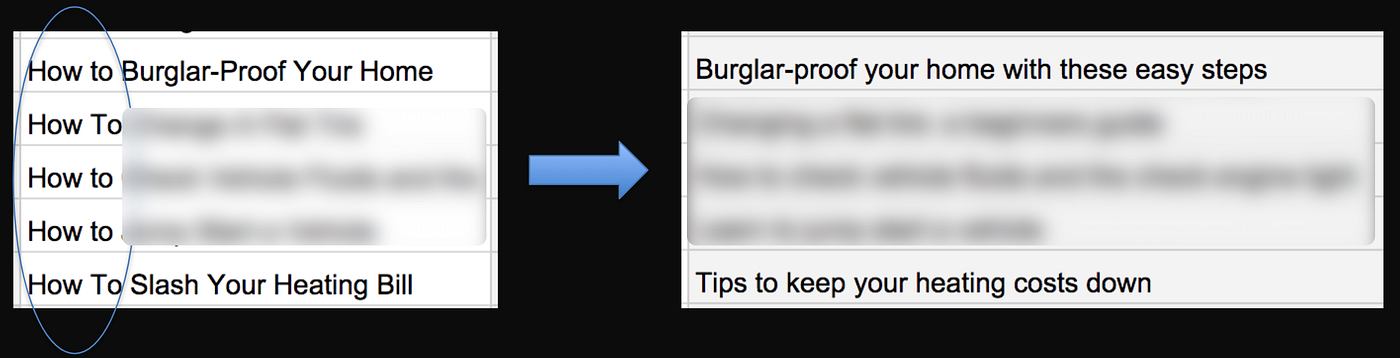

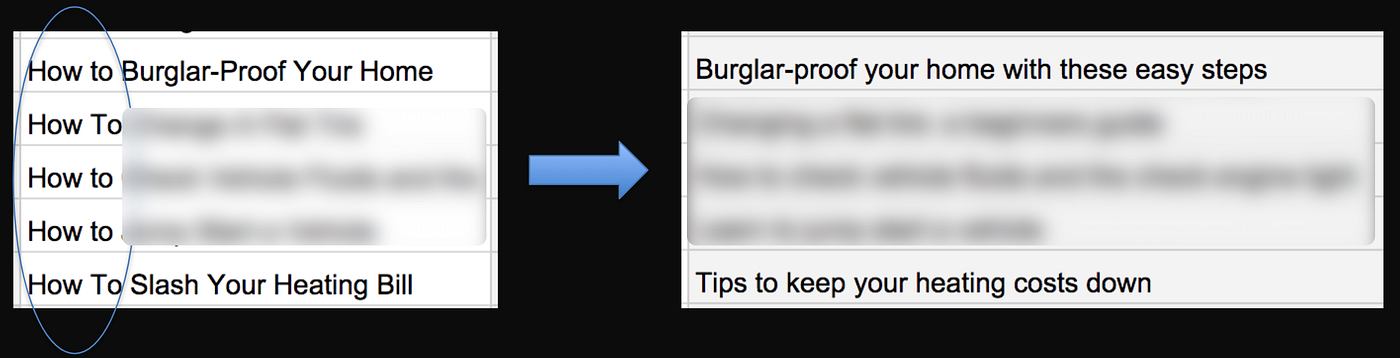

While it would have been nice to reuse the article titles as the card labels, we ended up rewriting nearly all 100 of them to 1) be easy for participants to understand, 2) accurately represent content, and 3) spell out any acronyms or jargon. For most articles, we used the sub-title as the starting point since those were more descriptive than the titles.

5. Fix biasing labels (don’t skip this!).

It’s very easy to bias participants in a card sort study and end up with categories that are less about how users approach the concepts and more about their keyword- and pattern-matching abilities. Since unchecked card labels can result in poor IAs, removing label bias is one of the most important jobs in designing a card sort study.

We reviewed the list of cards and spotted common words used across labels. We then found synonyms or alternate phrasings for those words. For example, “quiz” appeared on 5 of the card labels. If we’d left them as is, many users would have grouped “quiz” cards together even if the underlying content didn’t go together in users’ minds. So we replaced one “quiz” with “exam”, turned another label into a “what do you know about …?” question format, and so on.
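A first pass at spotting shared words can also be scripted. The sketch below is an illustration under stated assumptions, not a tool from this study: the card labels and the `STOPWORDS` filler list are hypothetical, and the script only flags candidates. A person still decides which labels need a synonym or a new phrasing.

```python
import re
from collections import Counter

# Hypothetical card labels for illustration; these are not the study's cards.
LABELS = [
    "Take a quiz on retirement savings",
    "Quiz: how much do you know about credit scores?",
    "Budgeting quiz for new graduates",
    "Ideas for homeowners planning a renovation",
    "Tips for parents opening a college fund",
]

# Hypothetical list of filler words that shouldn't count as biasing keywords.
STOPWORDS = {"a", "about", "do", "for", "how", "know", "much", "of", "on", "the", "to", "you"}


def shared_words(labels, min_cards=2):
    """Map each non-filler word to the number of labels containing it,
    keeping only words that appear on at least `min_cards` labels."""
    counts = Counter()
    for label in labels:
        # A set counts each word once per label, however often it repeats there.
        words = set(re.findall(r"[a-z']+", label.lower())) - STOPWORDS
        counts.update(words)
    return {word: n for word, n in counts.items() if n >= min_cards}


print(shared_words(LABELS))  # {'quiz': 3}
```

A flagged word, like “quiz” here, is a candidate for rewording; raising `min_cards` narrows the list on larger card sets.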

We also looked for common structures in the cards. For example, cards containing “ideas for homeowners” and “tips for parents” followed this pattern:

{Resource} for {Audience}

Had we left those labels as is, many users would have grouped the cards purely on their structural similarities, regardless of their content. So we varied the word order and structure of those labels, e.g. “how parents can …”.
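Structural repeats are harder to spot than shared words. One rough heuristic, sketched here under our own assumptions, flags every label containing a “<word> for <word>” phrase so a reviewer can check whether the template might be doing the grouping. The regular expression and sample labels are hypothetical. The pattern deliberately over-flags, because only a person can judge whether two matches would pull cards together.

```python
import re

# Hypothetical heuristic for the "{Resource} for {Audience}" template:
# any "<word> for <word>" phrase, case-insensitive.
TEMPLATE = re.compile(r"\b(\w+)\s+for\s+(\w+)\b", re.IGNORECASE)


def flag_template(labels):
    """Return (label, resource, audience) for each label matching the template."""
    hits = []
    for label in labels:
        match = TEMPLATE.search(label)
        if match:
            hits.append((label, match.group(1), match.group(2)))
    return hits


# Hypothetical labels: the first two share the template, the third was rephrased.
cards = [
    "Ideas for homeowners planning a renovation",
    "Tips for parents opening a college fund",
    "How parents can start a college fund",
]

hits = flag_template(cards)
if len(hits) >= 2:
    print(f"{len(hits)} labels share the template; consider varying them:")
    for label, resource, audience in hits:
        print(f"  {label!r} ({resource} / {audience})")
```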

These types of changes may feel counterintuitive to you as a researcher, designer, or writer. After all, your job is typically to make tasks easier for users. But as Jakob Nielsen points out:

“Card sorting isn’t a user interface design; it’s a knowledge elicitation exercise to discover users’ mental models. [Your study] has to make users work harder and really think about how they’d approach the concepts written on the cards.”

6. Get feedback on card labels and revise.

Just as we’d done with the content list, we shared the card labels with the project team — along with explanations and instructions — and captured their feedback. We also shared the list of cards with a couple of people unfamiliar with the project and asked them to tell us what each card meant. This helped us to identify and fix card labels that were vague or misleading.

7. Run a pilot study and revise (don’t skip this!).

We ran a pilot card sort with 2 participants. Pilots are always a good idea in user research, and they’re especially important when your study includes a quantitative component that becomes expensive to redo if you spot study design problems later on.

Based on what we saw in our pilot sessions, we reworded more labels to further cut repeated and needless words. We also removed 25 more cards that now seemed unnecessary, which made the sort easier to complete within our target time of 15 to 20 minutes.

After numerous iterations, we now had 75 cards that we felt good about in terms of representativeness, descriptiveness, clarity, and lack of bias. We were ready for the real study.

8. Recruit participants.

Recruitment was relatively easy for this study because the microsite’s target market was consumers of a widely used line of products. We lined up 55 participants, whom we sourced from a research panel and screened with a few questions.

9. Run qualitative sessions.

We ran 5 users through a remote think-aloud card sort, one at a time. Hearing their thoughts allowed us to understand why people group certain topics together and, more generally, it helped us to see the content from a user’s point of view.

10. Run quantitative sessions.

We ran 50 users through a remote unmoderated card sort, which each participant completed independently. We couldn’t hear what these participants were thinking, but we now had a more robust data set for spotting patterns and validating our moderated-session insights. Together, the two rounds gave us the right mix of qualitative and quantitative data.

11. Analyze results.

For the qualitative sessions, we captured observations on how participants grouped the content, and then we looked for patterns in our observations.

We ran the quantitative card sort through OptimalSort software, which provides great starting tools for analysis. As good as these tools are, they don’t hand us the best IA designs. Alongside qualitative data, they give us insights that inform our designs.

We focused much of our quant analysis on standardizing categories — a tedious process that produces the most accurate picture of user-generated categories and category names. We then added some insights from two data visualizations: the dendrogram and the similarity matrix.
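A similarity matrix is commonly defined as, for each pair of cards, the share of participants who placed both cards in the same group. The sketch below computes that from raw sorts. It assumes each participant’s result is a list of groups of card names, with each card appearing at most once per participant; the data is hypothetical. Analysis tools such as OptimalSort generate this view for you, so the code only illustrates what the percentages mean, and it skips category standardization.

```python
from itertools import combinations

# Hypothetical results: one list of groups per participant; each group is a set of cards.
SORTS = [
    [{"budget app", "saving tips"}, {"home loans", "refinancing"}],
    [{"budget app", "saving tips", "refinancing"}, {"home loans"}],
    [{"budget app"}, {"saving tips", "home loans", "refinancing"}],
]


def similarity_matrix(sorts):
    """Map each card pair (a, b), a < b, to the share of participants
    who put both cards in the same group."""
    cards = sorted(set().union(*(group for sort in sorts for group in sort)))
    together = {pair: 0 for pair in combinations(cards, 2)}
    for sort in sorts:
        for group in sort:
            # Sorting the group yields pairs in the same (a, b) order as the keys.
            for pair in combinations(sorted(group), 2):
                together[pair] += 1
    return {pair: count / len(sorts) for pair, count in together.items()}


matrix = similarity_matrix(SORTS)
for (a, b), share in sorted(matrix.items(), key=lambda item: -item[1]):
    print(f"{a} + {b}: {share:.0%}")
```

For a dendrogram, one option is to treat 1 - share as a distance and run hierarchical clustering, for example with SciPy’s `scipy.cluster.hierarchy.linkage`. Card sort tools may use their own clustering methods, so their trees can differ from this approach.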

12. Generate new IAs.

Armed with our analysis, we were ready to suggest changes to the project team’s draft IAs and to generate new ones. After some back-and-forth with the project team, we ended up with 3 IAs.

Two of these were completely new versions. The third was adapted from the strongest of the marketing team’s original drafts: we improved the labels but left the organization mostly untouched. Since it was the closest thing we had to a control version, we referred to it as “the original IA”.

Now we were ready to test our 3 IAs with a new set of users.

The Result: 75% Increase in Findability Plus Design Durability

Tree testing is our method of choice for evaluating an IA. We ran an A/B/C tree test with our 3 IAs. We recruited 540 participants and randomly assigned each person to one of the three IAs, giving us about 180 participants per IA. Each participant received a random subset of tasks drawn from a total set of 18. As with the card sort, we ran both qualitative and quantitative sessions, giving us the right mix of insights and confidence.
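The assignment step can be sketched as follows. This is a minimal illustration, not the logic of any tree-testing tool: it shuffles participants and deals them in turn across the three IAs, which guarantees exactly 180 per IA. The study’s “about 180” may reflect a different method or participant exclusions. The number of tasks each person saw isn’t stated, so `TASKS_PER_PARTICIPANT = 6` is a hypothetical value.

```python
import random

IAS = ["A", "B", "C"]        # the three IAs in the A/B/C test
TOTAL_TASKS = 18             # size of the full task set, per the study
TASKS_PER_PARTICIPANT = 6    # hypothetical; the study doesn't give this number


def assign(participant_ids, seed=None):
    """Return {participant: (ia, sorted task numbers)} with balanced IA groups."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    plan = {}
    for i, pid in enumerate(ids):
        ia = IAS[i % len(IAS)]  # dealing shuffled IDs in turn keeps group sizes equal
        tasks = rng.sample(range(1, TOTAL_TASKS + 1), TASKS_PER_PARTICIPANT)
        plan[pid] = (ia, sorted(tasks))
    return plan


plan = assign(range(540), seed=42)
for ia in IAS:
    print(ia, sum(1 for assigned, _ in plan.values() if assigned == ia))
```

Fixing `seed` makes the plan reproducible, which helps if the assignment has to be audited or rerun. Giving each person a random subset keeps sessions short while spreading the 18 tasks across the sample.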

Based on what we observed in tree testing, we picked the 2 top-performing IAs and made improvements to each of them. We then ran a final test with our 2 updated finalists — recruiting 360 new participants and giving them the same tasks. We compared the performance of the finalists against each other and against the 3 IAs we tested initially.

By the end of the tree testing process, we had tested 5 user-centered IA variations with 900 users. The top-performing IA outscored the original IA by 75% in terms of content findability.
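The case study doesn’t say which findability metric was compared or whether the 75% is relative or in percentage points. Assuming it is a relative increase in a score such as task success rate, the arithmetic looks like this. The 40% and 70% rates below are hypothetical and are not the study’s results.

```python
def relative_increase(baseline, variant):
    """Relative change of `variant` over `baseline` (0.75 means +75%)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (variant - baseline) / baseline


# Hypothetical success rates, not figures from the study.
original_ia, top_ia = 0.40, 0.70
print(f"Relative increase: {relative_increase(original_ia, top_ia):.0%}")
print(f"Absolute increase: {(top_ia - original_ia) * 100:.0f} percentage points")
```

The distinction matters when reporting: a 30-point gain and a 75% relative gain can describe the same pair of rates.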

A couple of months later, the IT team launched the microsite with the top-performing IA.

This project and product launch took place 8 years ago.

Today, the microsite is still there. Its content has grown exponentially. The web ecosystem it sits within has completely changed. No one remains from the original product team.

Follow-up user research has led to redesigns. And yet, the IA’s organization and category names have barely changed from the version we landed on years ago.

With the help of one card sort study, the team was able to transform its company-centered IA into a user-centered IA that has stood the test of time.

About the Project

- Industry: Financial services

- Platform: Website

- Audience type: Consumers

- Research area: UX

- Methods/tools: IA research (card sort, tree testing)

- Length: 1 month

- Stakeholders: Marketing, UX, and content teams

- Company size: Enterprise