Healthcare Case Study:

How a Website Survey Gave UVA Health Actionable UX Insights

How do you launch a website survey that captures actionable data without annoying users? Here’s the step-by-step process we used for a regional health system, along with the keys to our surveys’ success.

Background

Poorly designed surveys are everywhere on the web, in part because tools make it easy to throw one up with lots of questions. Most website surveys annoy users and fail to capture data that leads to user experience improvements. At best, some of the data ends up in a presentation or dashboard.

But done right, web surveys can have a real UX impact. On a recent project, we ran 2 short surveys that yielded actionable insights at a minimal cost to the user experience.

The setting was a UX research study for UVA Health, a large regional health system that includes one of the top-ranked hospitals and medical schools in the U.S. Their team recently migrated the public-facing UVA Health website to a new platform. As part of the migration, the team condensed several subdomains into one, flattened the information architecture, and increased the focus on search (for doctors, locations, and services).

Initial data suggested that the new website’s users were hitting UX barriers while trying to complete core tasks. UVA Health partnered with Marketade to conduct a mixed-methods usability study lasting about 6 weeks.

Our primary research was moderated usability testing with a small sample of patients and caregivers (learn more: Website Usability Testing with Patients for UVA Health). To supplement our qualitative research, we gathered data from 2 large-scale website surveys.

Here’s an overview of our process and takeaways.

Step #1: Pilot, Iterate & Launch

Every website is different, as is every website audience. We started by piloting a few surveys that we’ve found to be effective at both measuring UX and collecting qualitative responses on similar websites. We then tweaked the question wording and display criteria until we saw a strong response rate on the UVA Health site. We settled on these 2 surveys.

Ease-of-Use Survey

This survey opened with a simple, multiple-choice ease-of-use question. For those who selected “Difficult”, we asked an open-ended “why” follow-up. We served it to users as a popup after they’d visited 4 pages on desktop or 3 pages on mobile.
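As a rough illustration of that display rule, here is a minimal Python sketch. It is not Qualaroo's API; survey tools are typically configured through their own targeting settings. The function name, the device labels, and the constant are hypothetical, and only the 4-page and 3-page thresholds come from the project.

```python
# Page-view thresholds from the project: 4 pages on desktop, 3 on mobile.
# The dictionary name and device labels are illustrative assumptions.
PAGE_THRESHOLDS = {"desktop": 4, "mobile": 3}


def should_show_ease_survey(pages_viewed: int, device: str) -> bool:
    """Return True once a visitor has viewed enough pages for their device."""
    threshold = PAGE_THRESHOLDS.get(device)
    if threshold is None:
        # Unknown device type: stay quiet rather than risk annoying the user.
        return False
    return pages_viewed >= threshold


print(should_show_ease_survey(3, "desktop"))  # below the desktop threshold
print(should_show_ease_survey(3, "mobile"))   # meets the mobile threshold
```

Keeping the thresholds in one place makes it easy to tweak them during a pilot, which is how we arrived at these values.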

Task Survey

In the 2nd survey, we flipped things. We started with an open-ended task question and followed it with a multiple-choice question. We served this to users as they were about to leave the site — an exit-intent survey. (We used Qualaroo to run our surveys because it’s one of the few tools we’ve found with good exit-intent technology.)

These surveys worked well because:

- They’re short, with a max of 2 questions each. This helped to maximize the response rate and minimize user annoyance. In both surveys, we still gained useful data even when users skipped the second question.

- They gave us both quantitative and qualitative data.

- In combination, they gave us a good picture of both common tasks and top frustration reasons, and of the relationship between the two.

Step #2: Analyze

Once we received 600 responses, we turned the surveys off and started our analysis. To analyze the results, we:

- Gathered all survey responses in a single spreadsheet

- Calculated some ease-of-use metrics

- Filtered the list to responses with likely task failure or frustration

- Manually categorized the task failure and frustration reasons

- Counted the responses within each of those categories
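We did this work in a spreadsheet, but the same steps can be sketched in a few lines of Python. Everything below is an assumption made for illustration: the field names, the `pain` flag (standing in for our judgment that a response showed likely task failure or frustration), the category labels already filled in by hand, and the sample rows, which are invented and are not real survey responses.

```python
from collections import Counter

# Hypothetical sample rows, invented for illustration only.
# "rating" is None for task-survey rows that have no ease-of-use answer.
responses = [
    {"rating": "Easy", "pain": False, "category": ""},
    {"rating": "Easy", "pain": False, "category": ""},
    {"rating": "Neutral", "pain": False, "category": ""},
    {"rating": "Difficult", "pain": True, "category": "Medical Records"},
    {"rating": "Difficult", "pain": True, "category": "Search"},
    {"rating": None, "pain": True, "category": "Medical Records"},
    {"rating": None, "pain": False, "category": ""},
]


def rating_shares(rows):
    """Percentage of each ease-of-use rating among rows that have one."""
    ratings = [row["rating"] for row in rows if row["rating"]]
    if not ratings:
        return {}
    counts = Counter(ratings)
    return {label: round(100 * n / len(ratings)) for label, n in counts.items()}


def pain_category_counts(rows):
    """Count manually assigned categories among the flagged responses."""
    flagged = [row for row in rows if row["pain"]]
    return Counter(row["category"] for row in flagged).most_common()


print(rating_shares(responses))
print(pain_category_counts(responses))
```

The categorizing itself stays manual. The code only does the filtering and counting once each response has a label.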

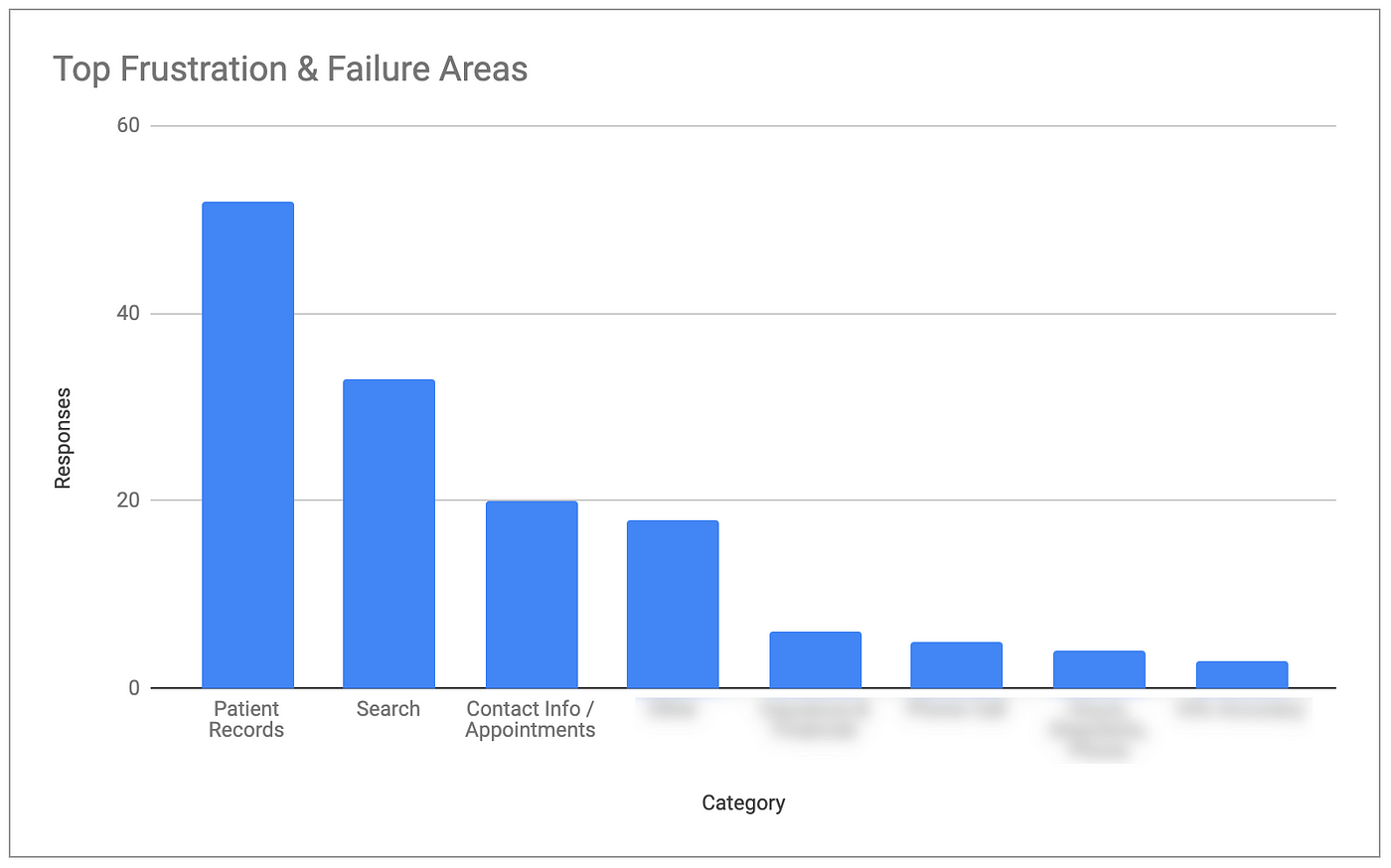

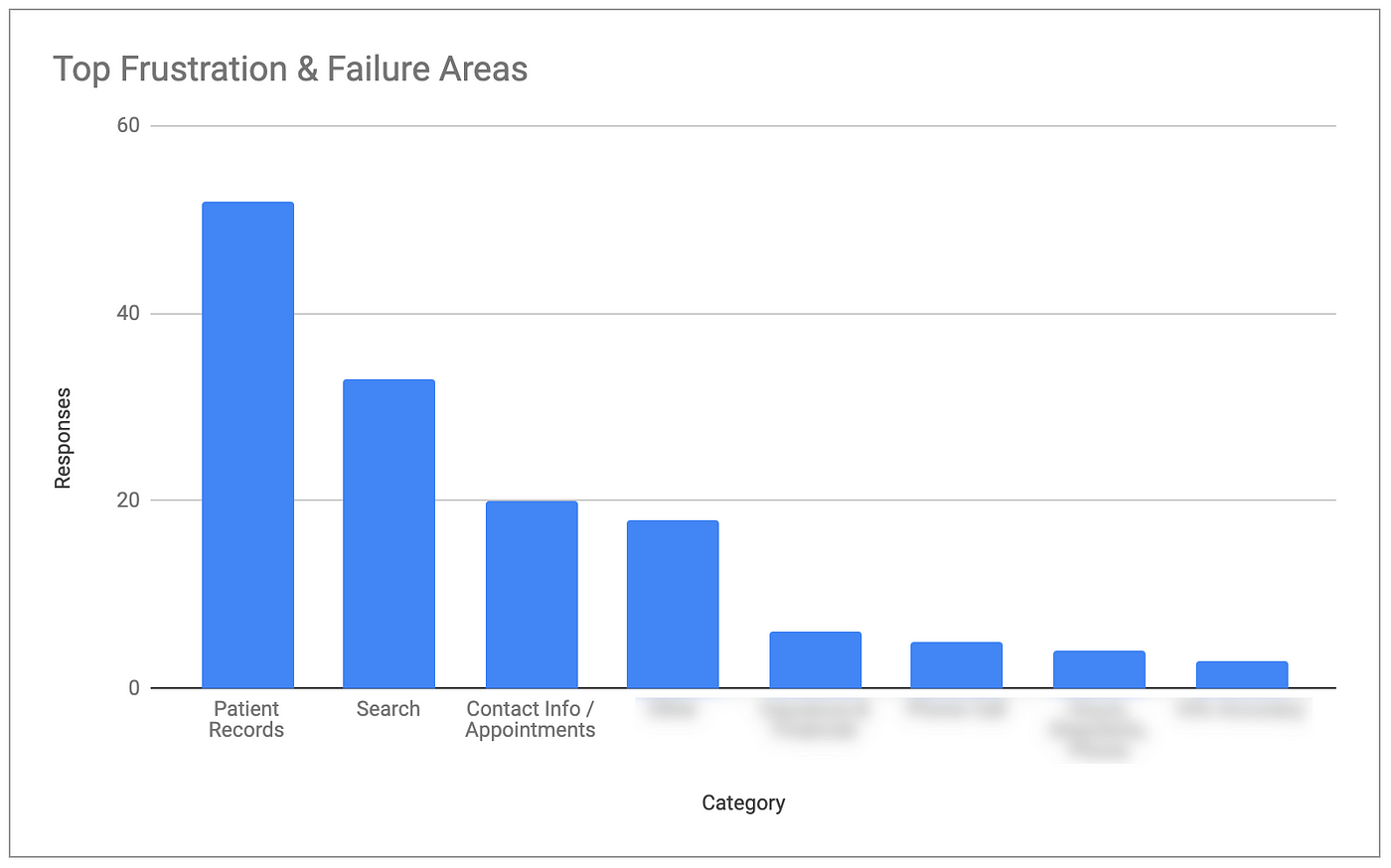

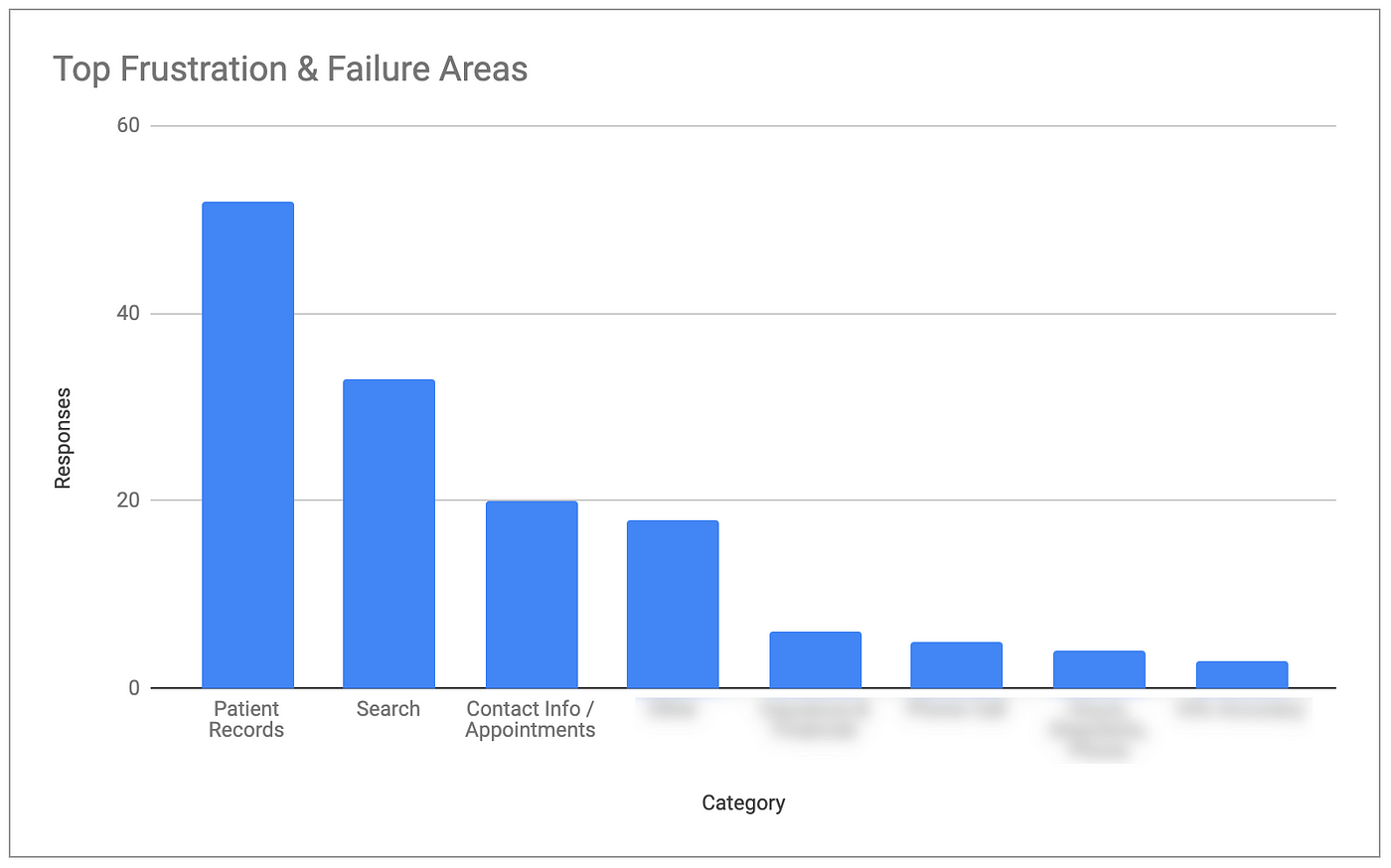

After our analysis was complete, we were able to identify the top task areas causing frustration and failure.

- The #1 area was Medical Records, in particular the task of trying to access or log into the records section of the site.

- The #2 area was Search, in particular the task of searching for a doctor.

- The #3 area was Contact Info & Appointments, in particular the task of making appointments online.

Survey data also showed that 46% of respondents found the site easy to use, while 22% found it difficult to use. This gave the team a baseline against which to measure future versions.

Step #3: Share

We shared these survey findings at a stakeholder workshop, just after the health system’s marketing team had watched recordings of the moderated usability sessions. After sharing the top frustration/failure areas, we showed direct quotes from each area that we captured in the survey.

While the biggest insights on this project came from qualitative testing, the survey allowed the team to:

- Establish and understand their benchmark usability score

- Validate, invalidate, or quantify their qualitative findings

- Identify new UX issues that did not come up in moderated testing

A Surprising Insight

While surveys often help us find small UX issues that qualitative research failed to surface, rarely do we uncover major new issues. On this project, we did.

At the start of the project, the marketing team said that the patient records section of the site was out of scope. A different team owns that section of the site and it’s a hard section to change, we were told.

So we excluded records-related tasks from our moderated testing and ensured that users in the records section of the site did not get the survey popup.

Despite its exclusion, survey data showed Patient Records as the #1 website frustration area, by far. How was this possible? Because the specific problem was access: many users could not even get into that part of the site.

After the workshop, the attendees shared this survey finding with the team that owns patient records. They included powerful user quotes that supported the finding. It worked: motivated by this surprising insight, a project was launched to improve this critical website flow.

Keys to Success

Here are some of the keys to our success with these 2 website surveys.

1. We limited each survey to 2 questions.

Long surveys lead to low response rates and less representative data. As Jakob Nielsen says, “you can’t trust [survey] data if it doesn’t represent your users.”

2. We included an open-ended question.

It’s tempting to think you know the top possible answers to your “why” question or task question — and to give people a list of responses to choose from. It certainly makes the analysis cleaner and easier. Avoid the temptation, at least for the first round. You’ll often be surprised at the responses, and there is huge value in hearing about problems in your users’ own words.

3. We ran a pilot.

Because it’s so important to get a good response rate, and because every site is different, always test and iterate before launching the final survey.

4. We focused on user pain during analysis.

User frustration, problems, task failures. That’s where the biggest insights and opportunities come from.

5. We quantified qualitative survey data by categorizing and counting.

Categorizing open-ended responses is tedious, but it allows you to count, compare, and quantify insights.

6. We shared survey data and insights along with qualitative research.

Surveys should almost always be a supporting act. Once your stakeholders have qualitative observations in mind, survey data can help to validate or supplement those findings.
